The MLOps Playbook: 6 Best Practices for Success in 2026

Machine Learning Operations, or MLOps, isn’t just another fancy acronym making the rounds. It's a revolution, a game-changer that’s becoming a fixture of every data-centric strategy.

If you’re wondering why, the simple answer is that MLOps unlocks the full potential of DevOps projects and machine learning models, transforming them from novelties into production-level powerhouses.

With the ability to extract actionable insights from vast amounts of data, MLOps can give businesses a competitive edge. It helps integrate ML models smoothly into business operations, enabling companies to innovate faster and make more accurate decisions.

However, successfully implementing MLOps in an organization isn’t easy. But don’t worry – Instatus has got you covered. In this article, we’ll delve into six MLOps best practices to take your machine learning operations to the next level. Let's get started!

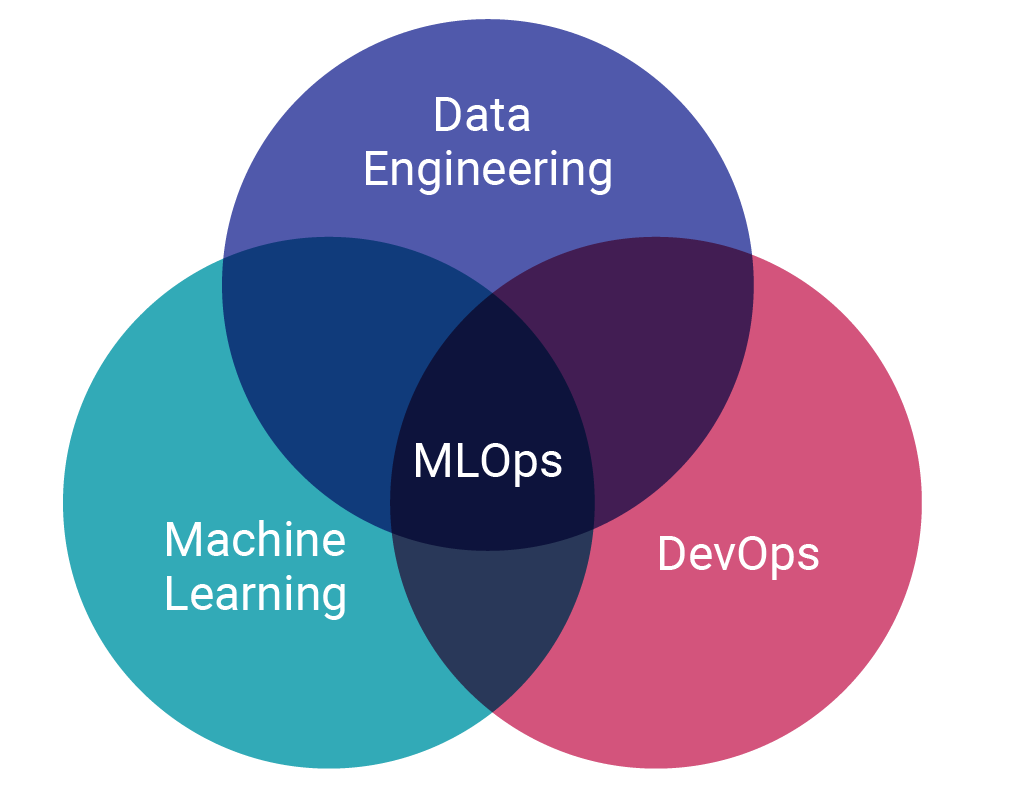

What is DevOps machine learning?

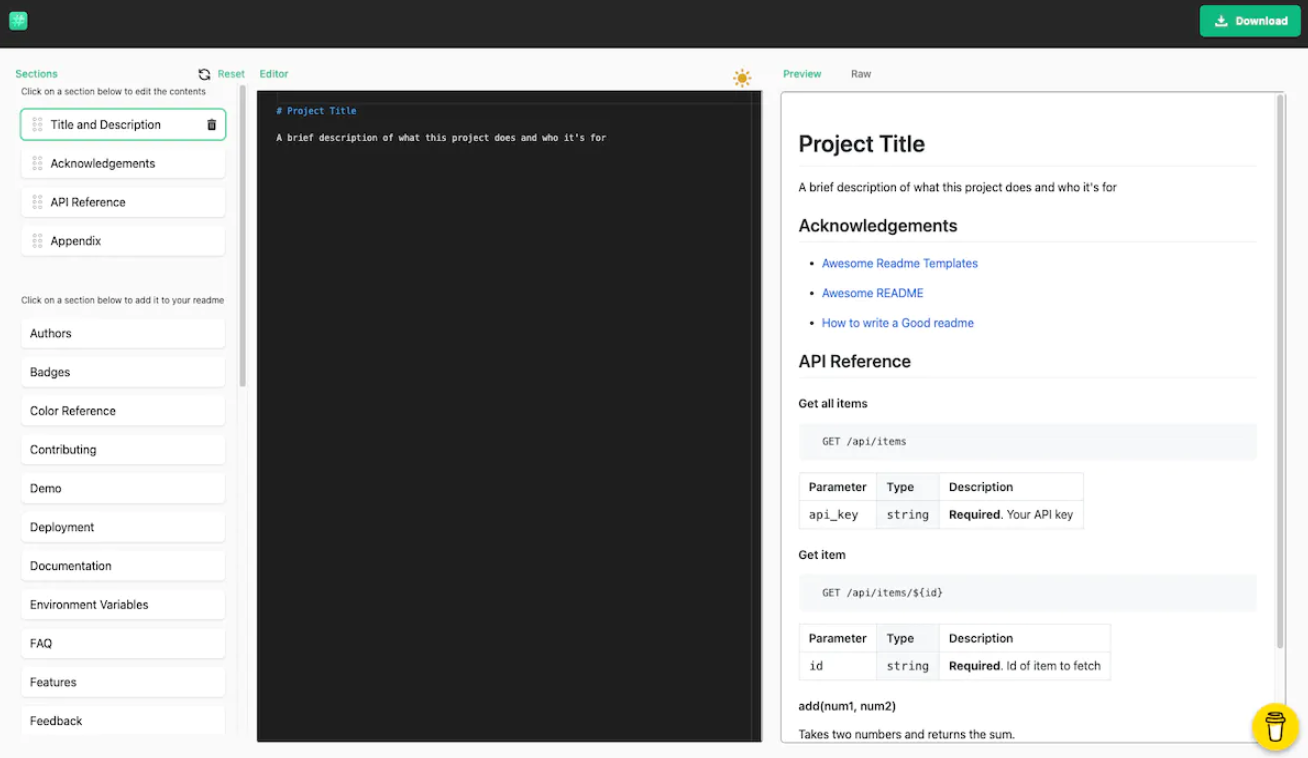

At its core, DevOps for Machine Learning brings together the development and operations aspects of machine learning, including continuous integration, delivery, and deployment – along with their ongoing monitoring and maintenance.

Just like DevOps in software development, the goal of MLOps is to shorten the life cycle of model development while streamlining the process of machine learning in a production environment.

MLOps aims to create a collaborative environment where data aficionados, ML engineers, and DevOps teams can work together efficiently, ensuring machine learning models are deployed rapidly, reliably, and responsibly.

MLOps project ideas

Diving into the world of MLOps can seem daunting, but it’s less about taking a massive leap and more about starting with manageable, impactful projects. Here are some project ideas that showcase various aspects of MLOps:

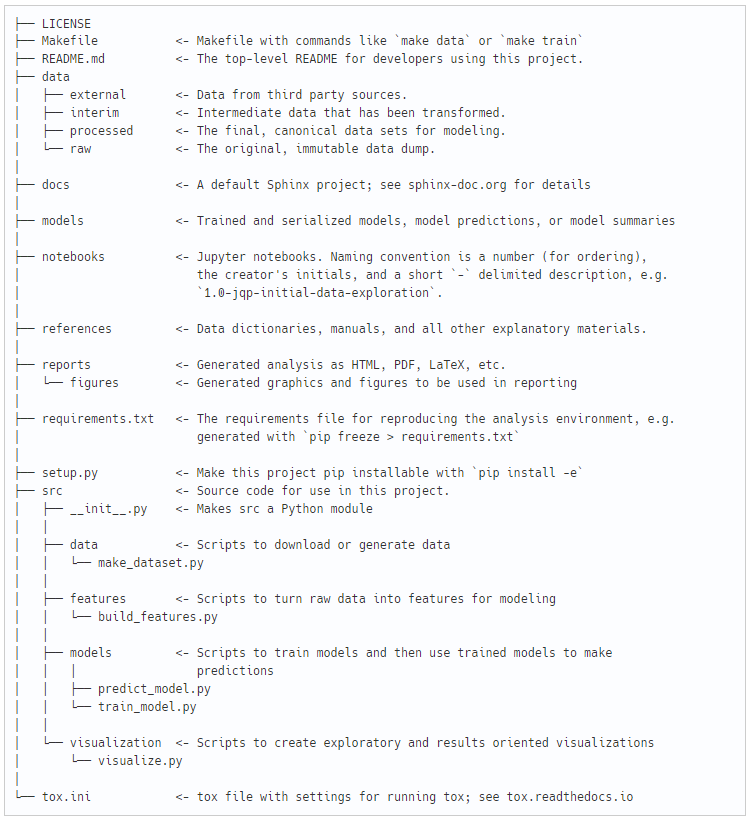

Improving project structure – cookiecutter

One illuminating takeaway is the impact MLOps has on the structure of your data science projects, and a handy tool that can help you get this right is cookiecutter.

Cookiecutter data science simplifies the creation of real-world machine learning applications. It enables you to start, design, and share analysis more efficiently and maintain a consistent and organized structure across your ML projects – all key components of MLOps.

Streamline MLOps documentation – readme.so

A common challenge many data scientists face is adequately documenting their projects, leading to lost or forgotten information. Readme.md files are a central part of project documentation but can be tricky to craft effectively – that’s where readme.so can help.

This intuitive editor offers real-time assistance, simplifying the process of creating comprehensive markdown files while enabling clear communication about your project’s structure, its components, and how to use or reproduce it.

Practical machine learning – MovieLens Dataset

In the era of streaming, smart recommendation systems curate a list of suggestions based on your viewing history and ratings.

Whether you’re comfortable with Python or Java, you can flex your coding skills with the MovieLens dataset. This dataset is like a treasure chest for movie buffs, brimming with over a million movie ratings contributed by more than 6,000 users.

Such a project provides an opportunity to apply MLOps principles. You could utilize cookiecutter to structure the project and readme.so to document your process. As more and more users contribute, you’ll get a taste of the ongoing nature of machine learning in action.
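To give a feel for where such a project might start, here is a minimal sketch of a popularity-style baseline: rank movies by average rating. It assumes ratings come as `UserID::MovieID::Rating::Timestamp` lines, the layout used by the MovieLens 1M `ratings.dat` file. The sample rows, the `min_ratings` cutoff, and the function names are made up for illustration.

```python
from collections import defaultdict

# Hypothetical sample rows in the UserID::MovieID::Rating::Timestamp layout.
SAMPLE_RATINGS = [
    "1::10::5::978300760",
    "1::20::3::978302109",
    "2::10::4::978301968",
    "2::30::5::978300275",
    "3::10::4::978824291",
    "3::20::2::978302268",
]


def parse_rating(line):
    """Split one ratings line into (user_id, movie_id, rating)."""
    user_id, movie_id, rating, _timestamp = line.strip().split("::")
    return int(user_id), int(movie_id), float(rating)


def top_movies(lines, min_ratings=2, limit=10):
    """Rank movies by average rating, skipping movies with too few ratings."""
    ratings_by_movie = defaultdict(list)
    for line in lines:
        _user, movie_id, rating = parse_rating(line)
        ratings_by_movie[movie_id].append(rating)

    averages = [
        (movie_id, sum(values) / len(values))
        for movie_id, values in ratings_by_movie.items()
        if len(values) >= min_ratings
    ]
    # Highest average first; ties broken by movie id for a stable order.
    averages.sort(key=lambda item: (-item[1], item[0]))
    return averages[:limit]


for movie_id, average in top_movies(SAMPLE_RATINGS):
    print(movie_id, round(average, 2))
```

A baseline like this is useful mostly as a yardstick: once it runs end to end, any fancier model you train has something concrete to beat.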

MLOps vs. DevOps

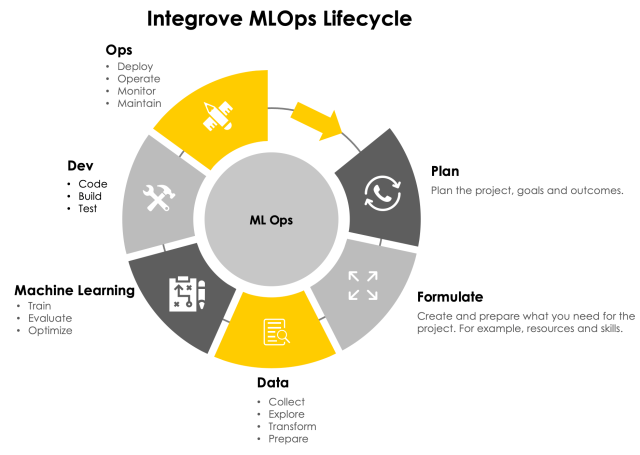

DevOps, a combination of “Development” and “Operations,” is rooted in agile development methodology and aims to shorten the software development lifecycle while delivering high-quality software.

However, with machine learning (ML) becoming a critical component of applications, the DevOps strategy needs to evolve – and that’s where MLOps comes in:

- Incorporating machine learning: MLOps adapts the DevOps model to accommodate machine learning better. While the core workflow and goal remain the same, machine learning projects bring new requirements and nuances that aren’t present in traditional DevOps.

- The role of data scientists: Unlike DevOps teams, where software developers are pivotal, data scientists are at the forefront of MLOps. They’re responsible for coding the ML model and training it on data to deliver the expected outcomes.

- Monitoring and retraining: Monitoring in MLOps is not only about ensuring availability and performance, as in the DevOps model, but also about detecting model drift. Model drift occurs when new data deviates from expectations, skewing results. Regular retraining of ML models is necessary to mitigate this risk.
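The drift idea in the last point can be sketched in a few lines. This is one simple approach among many, not a standard recipe: it measures how far the mean of a new batch has moved from the training baseline, in units of standard error. The function names, the sample numbers, and the threshold of 3.0 are assumptions for illustration.

```python
import statistics


def drift_score(baseline, new_batch):
    """How many standard errors the new batch mean sits from the baseline mean."""
    base_mean = statistics.mean(baseline)
    base_std = statistics.stdev(baseline)
    shift = abs(statistics.mean(new_batch) - base_mean)
    if base_std == 0:
        # A constant baseline: any shift at all counts as maximal drift.
        return 0.0 if shift == 0 else float("inf")
    standard_error = base_std / (len(new_batch) ** 0.5)
    return shift / standard_error


def has_drifted(baseline, new_batch, threshold=3.0):
    """Flag drift when the score exceeds the (assumed) threshold."""
    return drift_score(baseline, new_batch) > threshold


# Hypothetical values of one input feature.
training_values = [10, 12, 11, 9, 10, 11, 12, 10, 9, 11]
similar_batch = [10, 11, 10, 12, 9]
shifted_batch = [15, 16, 14, 17, 15]

print(has_drifted(training_values, similar_batch))
print(has_drifted(training_values, shifted_batch))
```

A mean-shift check only catches one kind of drift. In practice you would likely track several features and compare whole distributions, but the trigger logic stays the same: measure, compare against a threshold, then decide whether to retrain.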

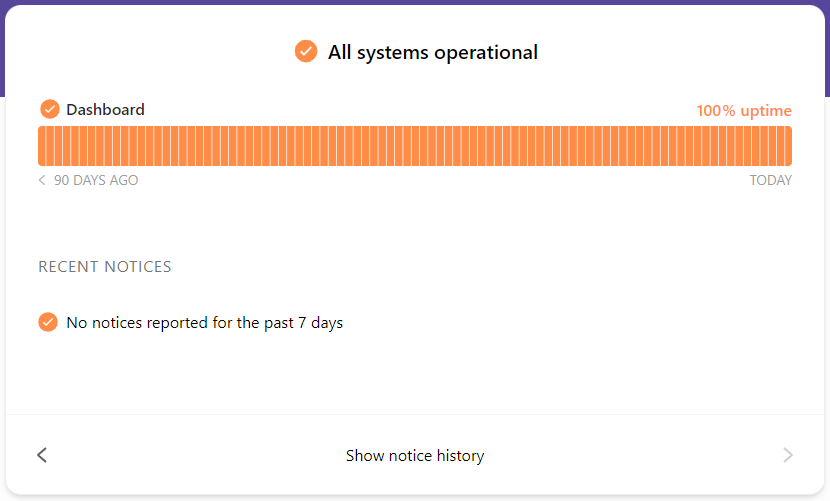

Both MLOps and DevOps teams have to deal with downtime, and that’s where Instatus comes in. Our status page builder integrates with numerous monitoring tools so that when issues arise, Instatus automatically sends out notifications and updates your status pages.

By providing real-time updates and clear communication, Instatus keeps everybody in the know, maintaining a high-quality customer experience, even during downtime.

Why is MLOps important?

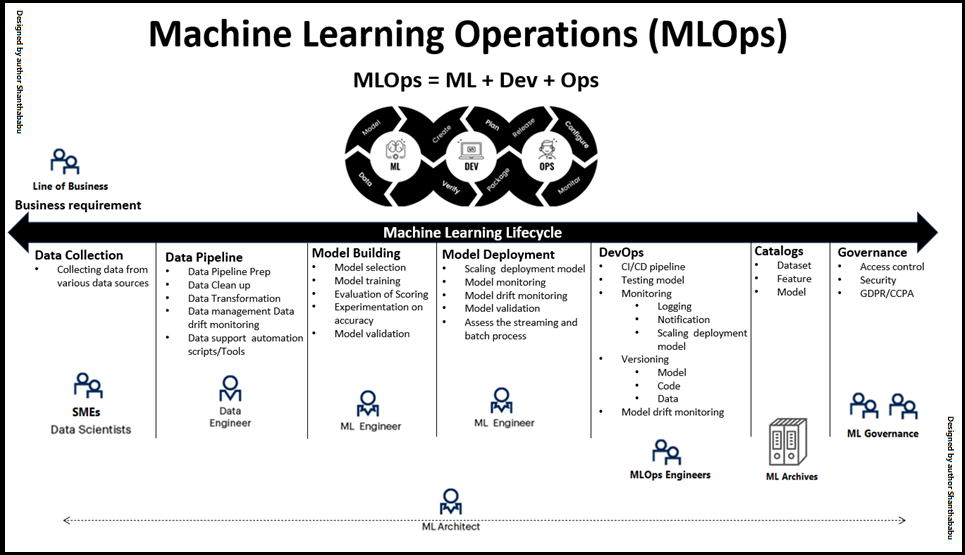

The main goal of MLOps is to streamline the lifecycle of machine learning models, from development to deployment and maintenance. With the growing popularity of machine learning operations, understanding its importance is essential. Here’s why:

- Establishing automated workflows

MLOps addresses the labor-intensive, repetitive tasks in the machine learning lifecycle. It automates the entire workflow, from data collection to model development, testing, retraining, and deployment.

- Increasing collaboration

MLOps brings in practices that standardize ML workflows, creating a unified language that data scientists, engineers, IT, and business professionals can all understand – eliminating compatibility issues and accelerating the whole process.

- Ensuring model quality

MLOps includes continuous monitoring of models in production to ensure performance doesn’t degrade over time.

- Cost savings

By automating many time-consuming tasks, resources are allocated much more efficiently. Furthermore, the reliability and predictability of ML workflows help avoid costly errors.

MLOps best practices

Integrating MLOps into your workflow boosts the efficiency of developing, deploying, and maintaining machine learning models. To fully harness its power, it’s crucial to adhere to a set of best practices, many of which take inspiration from DevOps best practices. Let’s take a look:

Define business objectives

Naturally, your objectives should provide a clear roadmap of your aims. Craft your goals following the SMART (Specific, Measurable, Achievable, Relevant, Time-bound) framework, tailored to your organization's unique needs.

In the machine learning lifecycle, key performance indicators (KPIs) help transform a business query into a machine learning solution, answering the question, “What do we want to derive from the data?”

If a model lacks clear objectives during development, data science teams may struggle to demonstrate its value to stakeholders. To avoid this, turn broad business questions into specific performance metrics – such as Mean Time to Repair (MTTR) and Mean Time to Detect (MTTD).
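To make those two metrics concrete, here is a small sketch that computes them from an incident log. Definitions vary between teams, so treat these as assumptions: MTTD is measured from when a problem started to when it was detected, and MTTR from detection to resolution. The incident records and field names are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical incident log: when each problem began, was noticed, and was fixed.
INCIDENTS = [
    {
        "started": datetime(2026, 1, 5, 9, 0),
        "detected": datetime(2026, 1, 5, 9, 10),
        "resolved": datetime(2026, 1, 5, 10, 0),
    },
    {
        "started": datetime(2026, 1, 12, 14, 0),
        "detected": datetime(2026, 1, 12, 14, 20),
        "resolved": datetime(2026, 1, 12, 15, 30),
    },
]


def mean_duration(incidents, start_key, end_key):
    """Average the time between two timestamps across all incidents."""
    total = sum(
        (incident[end_key] - incident[start_key] for incident in incidents),
        timedelta(),
    )
    return total / len(incidents)


mttd = mean_duration(INCIDENTS, "started", "detected")
mttr = mean_duration(INCIDENTS, "detected", "resolved")

print("MTTD:", mttd)
print("MTTR:", mttr)
```

With the sample log above, detection took 10 and 20 minutes (15 on average) and repair took 50 and 70 minutes (60 on average). Numbers like these are far easier to report to stakeholders than a general sense that things are improving.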

Set a standard with quality control

Setting quality standards for code, data, and models plays a pivotal role in MLOps. Implement practices such as code reviews, data quality checks, and model performance evaluation to ensure everything meets pre-defined standards.

Additionally, use version control for all elements to track changes and enable easy rollback. This is key to minimizing technical debt and keeping every part of the pipeline running smoothly, while avoiding the pitfalls that come with a lack of standardization.
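One way to enforce pre-defined standards is a simple quality gate that a model must pass before it ships. The sketch below is illustrative only: the metric names, the minimum values, and the function names are assumptions, and your own standards will differ.

```python
# Hypothetical minimum standards, agreed on before training starts.
QUALITY_STANDARDS = {"accuracy": 0.90, "recall": 0.80}


def failed_checks(metrics, standards=QUALITY_STANDARDS):
    """Return the names of metrics that are missing or below their minimum."""
    return sorted(
        name
        for name, minimum in standards.items()
        if metrics.get(name, float("-inf")) < minimum
    )


def passes_quality_gate(metrics, standards=QUALITY_STANDARDS):
    """A model passes only when every standard is met."""
    return not failed_checks(metrics, standards)


candidate = {"accuracy": 0.93, "recall": 0.75}
print(passes_quality_gate(candidate))
print(failed_checks(candidate))
```

Treating a missing metric as a failure is a deliberate choice here: a model that was never evaluated on a standard shouldn’t slip through just because nobody measured it.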

Validate your data sets

Data serves as the lifeblood of all machine learning projects, with its quality directly impacting the efficiency of your models. Data validation is your initial step, enhancing the ML model’s predictive capabilities by ensuring high-quality data sets.

Effective data validation includes identifying and removing duplicates, managing missing values, filtering biases, and cleaning the data. Undertaking these steps bolsters your model’s accuracy and optimizes the time spent on the overall process.
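Two of those steps, removing duplicates and handling missing values, can be sketched with plain Python. This example assumes each record is a flat dictionary with simple values; the field names and sample rows are hypothetical, and bias filtering is out of scope for a sketch this small.

```python
def validate_rows(rows, required_fields):
    """Split rows into clean ones and rejected ones (with a reason)."""
    seen = set()
    clean, rejected = [], []
    for row in rows:
        missing = [
            field for field in required_fields if row.get(field) in (None, "")
        ]
        # A hashable fingerprint of the row, used to spot exact duplicates.
        fingerprint = tuple(sorted(row.items()))
        if missing:
            rejected.append((row, "missing: " + ", ".join(missing)))
        elif fingerprint in seen:
            rejected.append((row, "duplicate"))
        else:
            seen.add(fingerprint)
            clean.append(row)
    return clean, rejected


# Hypothetical customer records.
rows = [
    {"user_id": 1, "age": 34, "plan": "pro"},
    {"user_id": 1, "age": 34, "plan": "pro"},
    {"user_id": 2, "age": None, "plan": "free"},
    {"user_id": 3, "age": 27, "plan": "free"},
]

clean, rejected = validate_rows(rows, required_fields=["user_id", "age", "plan"])
print(len(clean), "clean rows")
for row, reason in rejected:
    print("rejected", row["user_id"], reason)
```

Keeping the rejected rows alongside a reason, rather than silently dropping them, makes it much easier to spot when an upstream data source starts misbehaving.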

Establish naming conventions

Establish clear naming conventions for your datasets, models, and other elements involved in the ML lifecycle. This makes it easier for team members to find what they need and aids in understanding the relationships between different components.

This approach helps manage the “Changing Anything Changes Everything” (CACE) principle and helps new engineers get familiar with your team’s work faster.

Proper naming conventions also convey relationships between different components of the system. For instance, in a machine learning pipeline, if datasets and models are named logically and sequentially, it becomes easier to trace the flow of data.
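A convention is easier to keep when a script can check it. The sketch below assumes a made-up pattern, `<project>_<kind>_v<number>` (for example `churn_model_v3`), and shows how consistent names let you tell which dataset a model belongs to. The pattern and function names are illustrative, not a recommended standard.

```python
import re

# Hypothetical convention: <project>_<kind>_v<number>, e.g. "churn_model_v3".
NAME_PATTERN = re.compile(
    r"^(?P<project>[a-z][a-z0-9]*)_(?P<kind>dataset|features|model)_v(?P<version>\d+)$"
)


def parse_name(name):
    """Return the parts of a valid artifact name, or None if it breaks the rules."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return None
    parts = match.groupdict()
    parts["version"] = int(parts["version"])
    return parts


def same_lineage(name_a, name_b):
    """True when two artifacts share a project and a version number."""
    a, b = parse_name(name_a), parse_name(name_b)
    if a is None or b is None:
        return False
    return (a["project"], a["version"]) == (b["project"], b["version"])


print(parse_name("churn_model_v3"))
print(parse_name("FinalModel2"))
print(same_lineage("churn_dataset_v3", "churn_model_v3"))
```

A check like this could run in code review or in a CI step, so badly named artifacts get caught before they spread through the pipeline.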

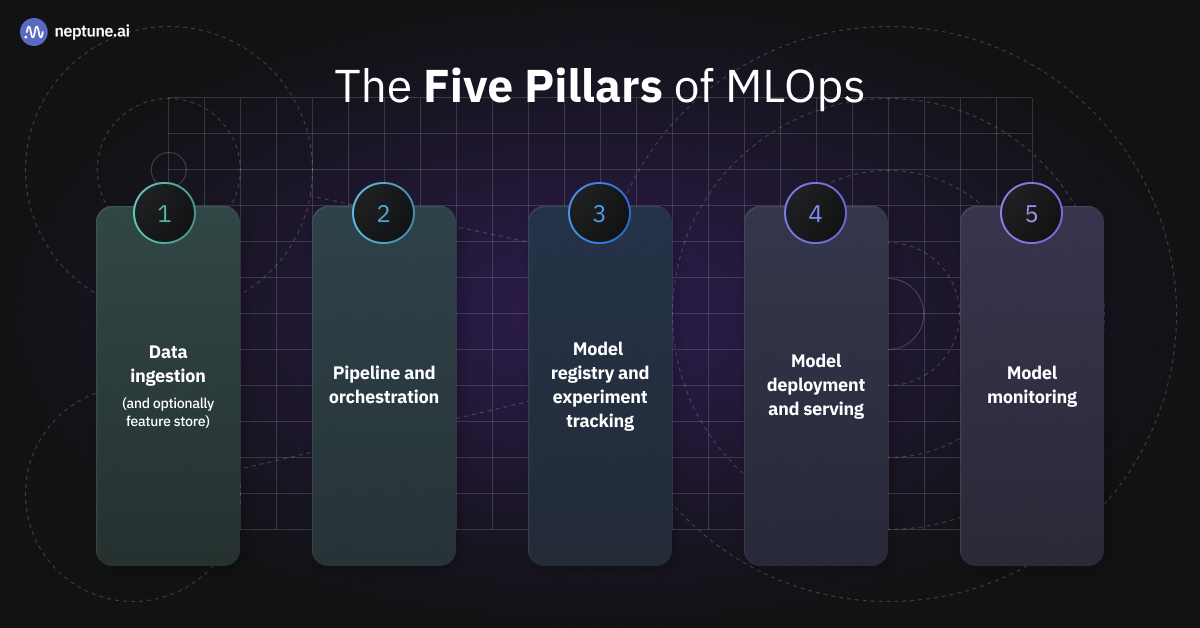

Strategically select your MLOps toolkit

Not every MLOps tool is designed the same, so your selection should be strategic to ensure long-term success. Factors to consider while building your MLOps toolkit include your business objectives, budget, MLOps tasks, and data set types.

Consider assembling a diverse range of MLOps tools, each equipped with unique features to handle different stages of the machine learning lifecycle.

Monitor data and model performance

Data monitoring involves keeping track of model performance metrics, data quality, and system health over time. This is your safety net, designed to quickly detect any emerging issues and identify bottlenecks in your ML workflows.

Both active and passive monitoring are crucial, as models can drift over time due to changes in the underlying data. By monitoring your models, you can detect any degradation in performance and retrain them as necessary.

As you interact with real-world data and situations, you may run into changes or challenges that were not present during the development stage. By continuously tracking key metrics, you can gain valuable insights into these unknown issues.
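As a rough illustration of tracking a key metric, here is a sketch of a rolling accuracy monitor that flags when retraining may be due. The class name, window size, and accuracy floor are assumptions chosen for the example, and it presumes you eventually learn the true outcome for each prediction.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent predictions."""

    def __init__(self, window_size=100, min_accuracy=0.85):
        # A bounded deque drops the oldest outcome once the window is full.
        self.outcomes = deque(maxlen=window_size)
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        # Wait for a full window so a couple of early misses don't raise an alert.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy


monitor = AccuracyMonitor(window_size=4, min_accuracy=0.75)
for predicted, actual in [("spam", "spam"), ("ham", "ham"), ("spam", "spam"), ("ham", "ham")]:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.needs_retraining())

# Two misses push the two oldest hits out of the window.
monitor.record("spam", "ham")
monitor.record("ham", "spam")
print(monitor.accuracy(), monitor.needs_retraining())
```

In a real setup, the retraining flag would typically feed an alert or kick off a pipeline rather than print to the console, but the core loop of record, measure, and compare is the same.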

Shape your future with MLOps

MLOps is not just a buzzword; it’s an evolution in the world of machine learning and DevOps. By bridging the gap between the two domains, it streamlines the development, deployment, and maintenance of machine learning models, fostering collaboration and efficiency.

Instatus enhances the MLOps experience by providing real-time status updates while integrating with your existing MLOps toolchain. We aim to keep everyone in the loop when issues arise, helping you remain transparent to your team and users.

Take your MLOps status page to the next level and sign up for a free Instatus account today!
